Imagine this: instead of typing code or writing complex scripts, you design AI models and machine learning (ML) workflows by arranging visual elements—almost like building with Lego blocks for the mind. No walls of text. No mysterious black-box lines of code. Just a visual thinking language that routes intelligence like a living map.

Why Visual Thinking Will Transform Machine Learning

Machine learning today is mostly a text-based, code-heavy domain. This creates a barrier: only those fluent in programming can fully participate. But in the next wave, visual thinking languages will become the new interface for AI, opening ML routes to anyone who can see patterns and make connections.

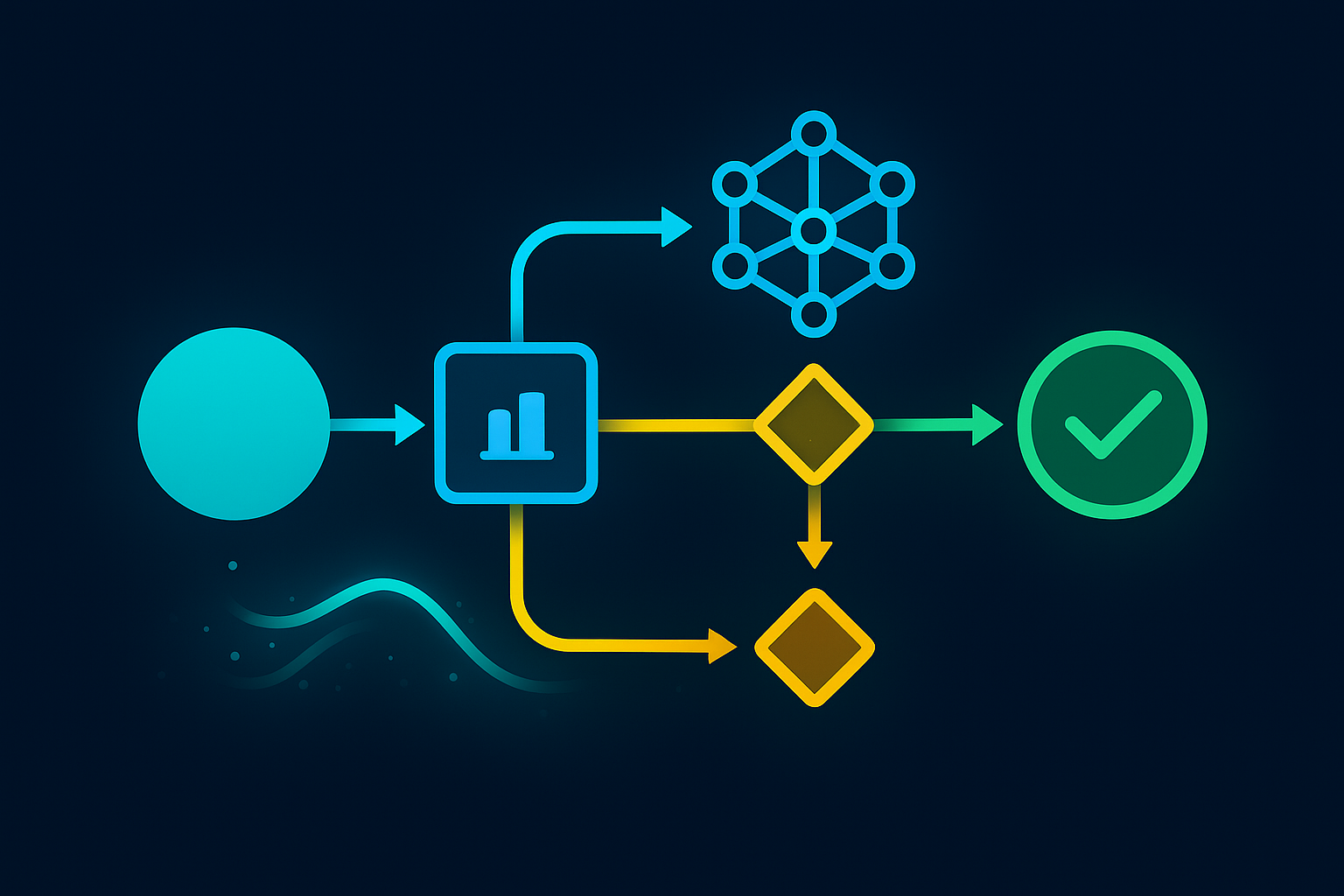

Visual thinking languages use diagrams, shapes, motion, and spatial relationships to represent ML processes. Instead of telling the machine “what” to do in words, you show it how the data flows, how the models connect, and where decision points live.

Think:

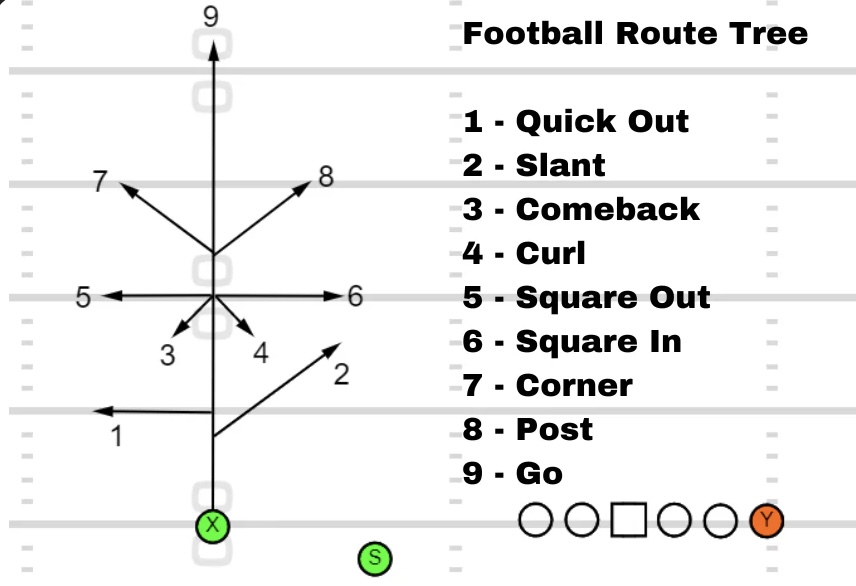

- Neural networks as branching trees you can reshape in real time

- Data streams as glowing rivers that thicken or thin based on volume

- Decision layers as interactive nodes you can slide, merge, or split

From Linear Code to Living Maps

In a visual-first ML environment, routes aren’t just pipelines—they’re navigable terrains. Imagine zooming in to see the fine details of an algorithm’s decision-making, then zooming out to view the entire AI ecosystem at a glance.

This shift will enable:

- Rapid prototyping: Drag-and-drop components to test new routes instantly.

- Multi-sensory debugging: Colors, shapes, and motion show where models fail or excel.

- Collaborative AI design: Artists, analysts, and engineers can work in the same space without needing a shared coding language.

Why “Routes” Matter in the Visual Future

In traditional ML, we talk about “pipelines” or “workflows,” but future AI will feel more like transportation networks—data taking different paths depending on conditions.

A visual thinking language for ML routes will make these paths explicit:

- Dynamic rerouting: Data automatically shifts to a different model when new inputs arrive or a model's performance changes.

- Parallel highways: Multiple models running side by side, visually represented like freeway lanes.

- Traffic visualization: Real-time metrics on data load, latency, and decision bottlenecks.
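To make the routing ideas above a little more concrete, here is a minimal Python sketch. Everything in it is hypothetical: the `Route` and `Router` names, and the rule of sending data down whichever lane has the lowest average latency, are assumptions made for illustration, not an existing tool or library.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class Route:
    """One 'lane' on the highway: a named model plus its observed latencies."""
    name: str
    model: Callable[[float], float]  # hypothetical stand-in for a real model
    latencies_ms: List[float] = field(default_factory=list)

    def mean_latency(self) -> float:
        # A route with no observations reports 0.0, so it gets tried first.
        if not self.latencies_ms:
            return 0.0
        return sum(self.latencies_ms) / len(self.latencies_ms)


class Router:
    """Keeps several routes side by side and picks one per request."""

    def __init__(self, routes: List[Route]) -> None:
        self.routes: Dict[str, Route] = {r.name: r for r in routes}

    def record(self, name: str, latency_ms: float) -> None:
        # Feed back a measurement for one route.
        self.routes[name].latencies_ms.append(latency_ms)

    def pick(self) -> Route:
        # Dynamic rerouting (assumed rule): lowest mean observed latency wins.
        return min(self.routes.values(), key=lambda r: r.mean_latency())

    def traffic(self) -> Dict[str, Dict[str, float]]:
        # The raw numbers a traffic visualization could draw from.
        return {
            name: {"calls": len(r.latencies_ms), "mean_latency_ms": r.mean_latency()}
            for name, r in self.routes.items()
        }
```

In a visual tool, `pick()` would be the moment a lane lights up, and `traffic()` would supply the numbers behind the thickness or color of each lane.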

Building a Universal Visual Language for Machines

For visual thinking to work at scale, we’ll need a universal grammar for AI diagrams—symbols, colors, and shapes that mean the same thing across platforms. Imagine an “emoji set” for machine learning:

- Circles for data pools

- Arrows for routes

- Pulses for active training

- Glow effects for uncertainty zones

With standardization, these diagrams could be read by both humans and machines—becoming the bridge between thought and execution.
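One way to picture a diagram that both humans and machines can read is as plain data: a fixed symbol vocabulary plus a list of arrows. The Python sketch below is only an illustration under that assumption. The `NodeKind` vocabulary, the `diagram` layout, and the `execution_order` helper are all made up for this example, and it uses the standard library's `graphlib` (Python 3.9+) to turn arrows into a run order.

```python
from enum import Enum
from graphlib import TopologicalSorter
from typing import Dict, List, Tuple


class NodeKind(Enum):
    """Hypothetical shared vocabulary: each kind maps to one visual symbol."""
    DATA_POOL = "circle"
    TRAINING = "pulse"
    UNCERTAINTY = "glow"


# A diagram is just nodes (name -> kind) and arrows (source, destination).
diagram = {
    "nodes": {
        "raw_data": NodeKind.DATA_POOL,
        "trainer": NodeKind.TRAINING,
        "predictions": NodeKind.DATA_POOL,
    },
    "arrows": [("raw_data", "trainer"), ("trainer", "predictions")],
}


def execution_order(nodes: Dict[str, NodeKind],
                    arrows: List[Tuple[str, str]]) -> List[str]:
    """Translate the drawn arrows into an order a machine could execute."""
    predecessors = {name: set() for name in nodes}
    for src, dst in arrows:
        if src not in nodes or dst not in nodes:
            raise ValueError(f"arrow {src!r} -> {dst!r} points at an unknown node")
        predecessors[dst].add(src)
    # Raises graphlib.CycleError if the arrows form a loop.
    return list(TopologicalSorter(predecessors).static_order())


print(execution_order(diagram["nodes"], diagram["arrows"]))
```

The same structure a person reads as circles and arrows is what the program walks, which is the sense in which a standardized diagram could bridge thought and execution.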

The Endgame: Thought-to-Route Interfaces

The holy grail? Direct brain-to-AI visual mapping. Your thoughts create the route diagram in real time, with the system translating your mental “sketch” into an operational machine learning model. We’re still far from that point, but the foundational tools—augmented reality, spatial computing, neural interfaces—are already emerging.

In short: the future of machine learning routes isn’t just faster or smarter—it’s more visual, more human, and more intuitive. And when that happens, AI won’t just be something we program—it will be something we design by sight.